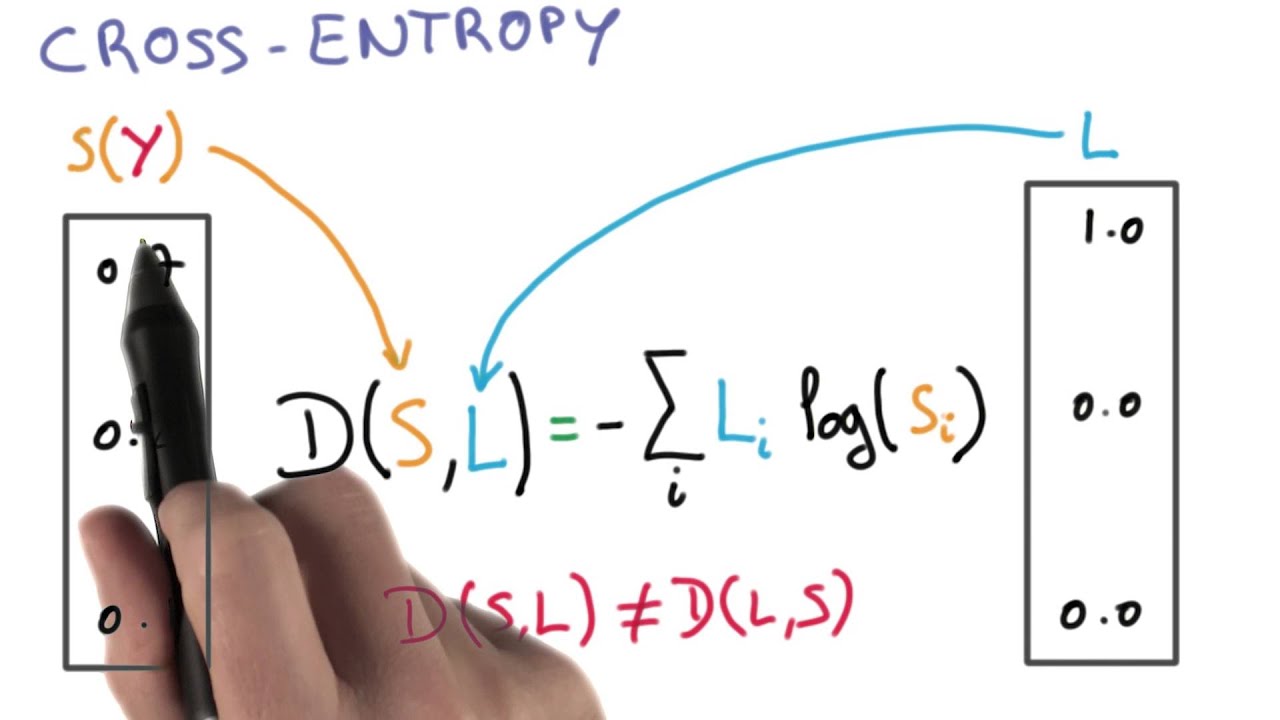

The term cross-entropy refers to a measure of the difference between two probability distributions: the average amount of information needed to encode outcomes drawn from p with a code optimized for q. The entropy of a probability distribution p over the states of a system can be computed as follows:

$$H(p) = -\sum_x p(x) \ln p(x)$$

In this case, the cross-entropy of distributions p and q can be formulated as follows:

$$H(p, q) = -\sum_x p(x) \ln q(x)$$

K-L divergence is equal to the difference between cross-entropy and entropy: $D_{KL}(p \| q) = H(p, q) - H(p)$.

Log-loss: Now we will move to the machine learning section. Cross-entropy loss, or log loss, measures the performance of a classification model whose output is a probability value between 0 and 1. It computes the cross-entropy loss between true labels and predicted labels. Use this cross-entropy loss for binary (0 or 1) classification. We define the cross-entropy cost function for this neuron by

$$C = -\frac{1}{n} \sum_x \left[ y \ln a + (1 - y) \ln(1 - a) \right]$$

where n is the total number of items of training data, the sum is over all training inputs, x, and y is the corresponding desired output. If you don't know, a is the predicted probability of a given data point belonging to a particular class/label. It's not obvious that the expression (57) fixes the learning slowdown problem.

I want to use the VAE to reduce the dimensions to something smaller. So, overall I get an input for my network, which is of the following shape: Additionally, I use a "history" of these values, to transport information about previous values into the network. Long story short, when it comes to the calculation of the loss function (I started from the PyTorch example) I get into some problems. Due to the fact that I don't use images as input, I changed the binary cross-entropy to normal cross-entropy, utilizing `F.cross_entropy(reconstructed_x, x, reduction='sum')` instead of `F.binary_cross_entropy(reconstructed_x, x, reduction='sum')`, and now I get the following error message: ValueError: Expected target size (64, 17), got torch.
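To make the relationship between entropy, cross-entropy, K-L divergence and the binary cross-entropy cost concrete, here is a minimal sketch in plain Python. The function names, the example distributions `p` and `q`, and the clamping constant `eps` are illustrative assumptions, not taken from any library or from the text above.

```python
import math


def entropy(p):
    """H(p) = -sum_x p(x) ln p(x), in nats. Terms with p(x) = 0 contribute 0."""
    return -sum(px * math.log(px) for px in p if px > 0)


def cross_entropy(p, q):
    """H(p, q) = -sum_x p(x) ln q(x). Assumes q(x) > 0 wherever p(x) > 0."""
    return -sum(px * math.log(qx) for px, qx in zip(p, q) if px > 0)


def kl_divergence(p, q):
    """D_KL(p || q) = sum_x p(x) ln(p(x) / q(x))."""
    return sum(px * math.log(px / qx) for px, qx in zip(p, q) if px > 0)


def binary_cross_entropy_cost(outputs, targets, eps=1e-12):
    """C = -(1/n) sum_x [y ln a + (1 - y) ln(1 - a)].

    outputs: predicted probabilities a in (0, 1)
    targets: desired outputs y (0 or 1)
    eps:     hypothetical clamp to keep log() away from 0
    """
    n = len(outputs)
    total = 0.0
    for a, y in zip(outputs, targets):
        a = min(max(a, eps), 1.0 - eps)
        total += y * math.log(a) + (1.0 - y) * math.log(1.0 - a)
    return -total / n


# Illustrative distributions over three states (made-up numbers).
p = [0.5, 0.25, 0.25]
q = [0.4, 0.4, 0.2]

gap = cross_entropy(p, q) - entropy(p)
print("H(p, q) - H(p):", gap)
print("D_KL(p || q):  ", kl_divergence(p, q))

# Confident, correct predictions should cost less than hesitant ones.
print("confident:", binary_cross_entropy_cost([0.9, 0.1], [1, 0]))
print("hesitant: ", binary_cross_entropy_cost([0.6, 0.4], [1, 0]))
```

The two printed quantities in the first pair should agree up to floating-point rounding, which is the "difference between cross-entropy and entropy" statement in numerical form.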

I'm currently trying to implement a VAE for dimensionality reduction purposes. As a base, I started from PyTorch's VAE example, which uses the MNIST dataset. My own problem, however, does not rely on images, but on a 17-dimensional vector of continuous values.

Next we show the partial derivative of the cross-entropy loss function defined above. For categorical classification, cross entropy loss contributed by
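The text announces the partial derivative of the cross-entropy cost without writing it out. As a hedged sketch, assume a single sigmoid neuron with one scalar input, so that a = σ(wx + b). Under that assumption the standard result is ∂C/∂w = (1/n) Σ x (σ(z) − y) and ∂C/∂b = (1/n) Σ (σ(z) − y). The code below compares these formulas with a central finite-difference estimate; the training pairs, the starting values of `w` and `b`, and the step size `h` are made up for illustration.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def cost(w, b, data):
    """Cross-entropy cost C = -(1/n) sum [y ln a + (1 - y) ln(1 - a)], a = sigmoid(w*x + b)."""
    n = len(data)
    total = 0.0
    for x, y in data:
        a = sigmoid(w * x + b)
        total += y * math.log(a) + (1.0 - y) * math.log(1.0 - a)
    return -total / n


def analytic_gradient(w, b, data):
    """Closed-form partial derivatives for the single-neuron assumption."""
    n = len(data)
    dw = sum(x * (sigmoid(w * x + b) - y) for x, y in data) / n
    db = sum(sigmoid(w * x + b) - y for x, y in data) / n
    return dw, db


def numeric_gradient(w, b, data, h=1e-6):
    """Central finite differences, used only to check the formulas above."""
    dw = (cost(w + h, b, data) - cost(w - h, b, data)) / (2 * h)
    db = (cost(w, b + h, data) - cost(w, b - h, data)) / (2 * h)
    return dw, db


# Hypothetical (input, desired output) pairs and starting parameters.
data = [(1.0, 0.0), (0.5, 1.0), (-1.5, 1.0), (2.0, 0.0)]
w, b = 0.6, 0.9

print("analytic:", analytic_gradient(w, b, data))
print("numeric: ", numeric_gradient(w, b, data))
```

Note that the closed-form gradient contains no σ′(z) factor: it is proportional to the error σ(z) − y, so a badly wrong neuron still receives a large gradient. This is the sense in which the cross-entropy cost avoids the learning slowdown.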
